News from Level 4 (2)

Robot taxi stranded in the fog . . .

Level 4 is more widespread in China and the U.S. than here. We are referring specifically to Germany; we do not have a clear picture of whether approval rules are less stringent elsewhere in Europe.

Yes, every extension of a system's capabilities must pass type approval before it can be sold to customers.

Yes, the German authorities have become almost notorious for their caution. But a single fatality is enough to bring the entire industry into disrepute, provided it can be clearly shown that a human driver at the wheel would almost certainly have prevented it.

As I said, we would be happy if Level 3 had at least arrived somewhere in privately owned cars. Instead, manufacturers resort to adding a plus sign to the term 'Level 2' ('Level 2+'). Even for a lane change, the car is not allowed to make the decision entirely on its own.

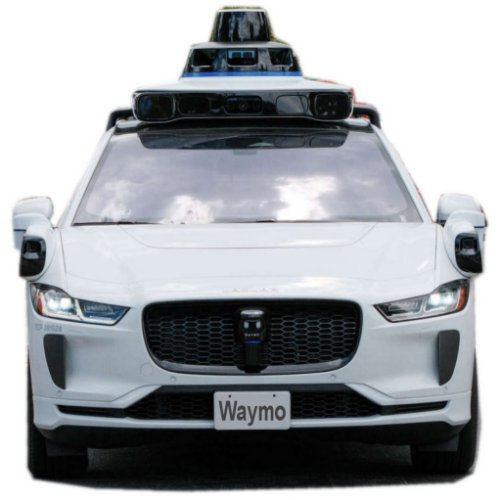

You would bet that the systems are capable of more; they are just not allowed to show it. Take a look at the progress made in lidar (Light Detection and Ranging). The basic principle:

A laser pulse (a laser beam lasting only a very short time) is emitted. Suppose it meets a wall of fog.

If the fog is not too dense, the pulse can penetrate it; for thicker fog, there is still radar on board. Lidar has advantages over cameras, for example because it is a so-called active sensor. In principle, cameras can only produce flat (two-dimensional) images.

Suppose, then, that a laser pulse has passed through the (lighter) fog largely unaffected and hits an object. There are now three possibilities: the beam is reflected (reflection), or it is absorbed by the object and converted into heat (absorption).

It can also be scattered in many directions by the object (scattering), although what matters most, of course, is the portion that returns to the sensor. As with all light, these are photons, which cover the distance at the speed of light.

Since the speed is known, the measured travel time gives the distance; because the pulse travels out and back, the distance to the object is half the round trip. Detected objects therefore do not simply sit side by side as in a camera image; each carries a measured depth, which in principle yields an exact 3D image.
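The distance calculation can be sketched in a few lines of Python. This is a minimal illustration, not any manufacturer's implementation: the function names `tof_distance` and `to_point` and the axis convention are hypothetical. The sketch halves the round-trip time and then combines the resulting distance with the beam's known direction to obtain one 3D point.

```python
import math

# Speed of light in vacuum (m/s); the small slowdown in air is ignored here.
C = 299_792_458.0


def tof_distance(round_trip_s: float) -> float:
    """Distance to the target from the measured round-trip time.

    The pulse travels out and back, so the one-way distance is c * t / 2.
    """
    return C * round_trip_s / 2.0


def to_point(distance_m: float, azimuth_deg: float, elevation_deg: float):
    """Turn a range plus the known beam direction into an (x, y, z) point.

    Hypothetical convention: x forward, y left, z up; azimuth measured from
    the x-axis towards y, elevation measured up from the x-y plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = distance_m * math.cos(el)
    return (horizontal * math.cos(az), horizontal * math.sin(az), distance_m * math.sin(el))


if __name__ == "__main__":
    d = tof_distance(1e-6)  # echo arrives 1 microsecond after the pulse
    print(f"{d:.1f} m")  # → 149.9 m
    print(to_point(d, 10.0, -2.0))
```

Repeating this for every pulse, each fired in a slightly different direction, is what builds up the point cloud.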

Lidar brings its own light source, whereas a camera relies on the available light. This is also one reason it copes better with fog, for example. Some lidar manufacturers also offer software, for example from Nvidia, that identifies object types directly.

In the automotive sector, a wavelength of 905 nanometers in the near-infrared range has become the standard. Only a few models use a wavelength of 1,550 nanometers, which requires more expensive components.

The laser beam is not entirely harmless to the human eye.

You should not look into the beam even when the vehicle is stationary, because the lidar may not switch off reliably. The emitted laser light is, however, also subject to legal limits. For cameras, it is sometimes said to be even more harmful than for the eyes.

A lidar's overall performance is determined mainly by its pulse rate. A long range of up to 300 meters, however, comes with lower resolution, which also makes object detection more difficult. In addition, there is the energy per pulse, for which legal limits (protecting the eyes) must be observed.
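A rough sketch, assuming a simple design with only one pulse in flight at a time, shows why range and resolution pull against each other: the next pulse can only be fired once the echo from the maximum range has returned, and at a fixed angular step the gap between neighbouring points grows with distance. The function names and the 0.1-degree step are hypothetical illustration values, not figures from any real sensor.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def max_pulse_rate_hz(max_range_m: float) -> float:
    """Highest pulse rate if each echo must return before the next pulse fires.

    Assumes one pulse in flight at a time; the round trip to max_range_m
    takes 2 * R / c seconds.
    """
    return C / (2.0 * max_range_m)


def point_spacing_m(range_m: float, angular_step_deg: float) -> float:
    """Approximate gap between neighbouring points at a given distance.

    For small angles, arc length is roughly range * angle (in radians).
    """
    return range_m * math.radians(angular_step_deg)


if __name__ == "__main__":
    for r in (100.0, 300.0):
        print(f"{r:5.0f} m: max {max_pulse_rate_hz(r) / 1e3:6.0f} kHz, "
              f"spacing at 0.1 deg {point_spacing_m(r, 0.1):.2f} m")
```

At 300 m the round trip takes about 2 microseconds, which caps such a design at roughly 500,000 pulses per second; real sensors may work around this, for example by running several laser channels in parallel.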

There are also different approaches to scanning the surroundings. Incidentally, the old all-round (360-degree) sensors seem to be no longer available, which is why you need at least one sensor each at the front and rear, plus one at each of the four corners.
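Whether a set of fixed, non-rotating sensors leaves blind spots can be checked with a simple horizontal-coverage sketch. The mounting headings and fields of view below are hypothetical values chosen for illustration, and the model ignores that the sensors sit at the vehicle's corners rather than at its center.

```python
# Hypothetical layout: (mount heading in degrees, horizontal field of view in degrees).
# 0 deg = straight ahead, angles increase counter-clockwise.
FRONT_AND_REAR = [(0, 120), (180, 120)]
CORNERS = [(45, 90), (135, 90), (225, 90), (315, 90)]


def uncovered_degrees(sensors):
    """Return every whole-degree heading (0..359) that no sensor sees."""
    covered = set()
    for heading, fov in sensors:
        half = fov / 2.0
        for deg in range(360):
            # Smallest angle between this heading and the sensor axis (0..180).
            diff = abs((deg - heading + 180) % 360 - 180)
            if diff <= half:
                covered.add(deg)
    return [deg for deg in range(360) if deg not in covered]


if __name__ == "__main__":
    print(len(uncovered_degrees(FRONT_AND_REAR)))  # → 118
    print(len(uncovered_degrees(FRONT_AND_REAR + CORNERS)))  # → 0
```

With these assumed values the six sensors cover all 360 degrees, while front and rear alone leave 118 one-degree gaps on the sides.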
