Mobileye 1

Prof. Amnon Shashua is both President and CEO of Mobileye and a Senior Vice President of Intel, which shows how closely the two companies are connected. Mobileye covers the entire spectrum from driver assistance to fully autonomous driving. Mobileye, you may recall, is the company Tesla initially collaborated with. The separation probably came after one of the first accidents involving Teslas that were driving a little too autonomously. Tesla has since continued development on its own.

The requirements and orders are becoming more numerous and complex every year. In 2019 alone, 17.4 million chips were delivered, and a total of 54 million vehicles have been equipped with EyeQ chips since 2007. Here, we will only mention the major challenges and how Mobileye and Intel are addressing them. The situation is characterized by the opportunities available now and in the future. For the future, the aim is to create a system with only one serious fault per 10,000 hours of operation.

If that's not daring enough, a ready-made package consisting of sensors, cables, and evaluation software is to be put together for $5,000. It is meant to transform an ordinary consumer car into a self-driving vehicle in which the driver can relax in the back seat. The robo-taxi, which was supposed to be introduced earlier, keeps coming up in the discussion, with equipment and software intended not only for in-house development but also for other manufacturers of such vehicles.

So much for the visions. The next planned steps are much more concrete. Since Mobileye has probably also realized that the step from Level 2 to Level 3 is quite a big one, they now favor 'Level 2+'. This means that, first of all, the equipment will be upgraded: instead of just one front camera, there will be several. Cameras are pretty much the most cost-effective sensor technology for an assistance system. A high-resolution map would then be added. This could extend the time the driver can stay out of the driving process to as much as several minutes.

And then there is the group's foresight in opening up new business areas related to mobility and software. First, there is the 'back office', a term for the part of a company that does not belong to its core business but serves to keep it running. And then, of course, there is fleet management and optimization. The extent to which this also applies to taxi services and thus to Uber is not entirely clear.

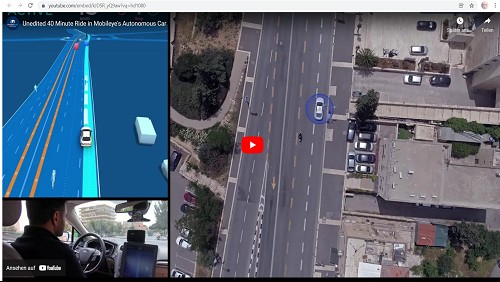

How the cameras work is described with astonishing precision. It starts with the images generated, which are sent to the cloud at a rate of only 10 kB per kilometer and are presumably processed there. Ultimately, all the submissions are combined into a high-resolution image. Surprisingly, the road surface is shown in green, making it easier to distinguish between markings and road users. The latter are already categorized. We have seen that many times before, in the form of the strange boxes drawn around them.
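To put the figure of 10 kB per kilometer into perspective, the following minimal sketch converts a driven distance into an upload volume. Only the rate comes from the text; the function name `upload_volume_mb` and the annual mileage in the example are assumptions chosen purely for illustration.

```python
# Only the 10 kB/km rate is taken from the text; everything else is assumed.
UPLOAD_KB_PER_KM = 10


def upload_volume_mb(distance_km: float, kb_per_km: float = UPLOAD_KB_PER_KM) -> float:
    """Return the uploaded data volume in megabytes (1 MB = 1000 kB)."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    return distance_km * kb_per_km / 1000


if __name__ == "__main__":
    # Hypothetical annual mileage of 15,000 km (not from the source).
    print(upload_volume_mb(15_000), "MB per year")
```

Under these assumptions, a single car would upload about 150 MB in a whole year, which illustrates how small the data stream per vehicle is.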

When searching for a drivable route, it is important not to rely solely on the marked lanes, but to explore the surrounding area as well. That is why the idea arose of using every available camera, including those that create an image all around the car. This is where the physical boundaries of the road become apparent. Hopefully, intelligent software will then ensure that tram tracks are no longer used for guidance. Do we have everything? Of course not, because there is still important infrastructure such as traffic signs and traffic lights.

Redundancy is considered a very important keyword. It becomes possible when radar, ultrasound, laser, and lidar are added, which, incidentally, are not considered necessary for Level 2+. The different systems should then be evaluated separately and only compared afterwards. It could be decided, for example, that if two systems arrive at the same result, the one that arrives at a different result is ignored: two against one, or three against one. Such a strict separation is also required when evaluating image material alone. So, apply a specific sequence of algorithms in each case and only compare the results afterwards. Please keep in mind the incredibly low error rate that has ultimately been set as the target.
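The 'two against one' rule described above can be sketched as a simple majority vote over results that were computed independently. This is only an illustration of the principle, not Mobileye's actual implementation; the function name `majority_vote` and the example labels are hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence


def majority_vote(results: Sequence[str]) -> Optional[str]:
    """Return the result shared by a strict majority of channels, else None.

    Each entry is the final result of one independently evaluated channel
    (for example camera, radar, lidar). Hypothetical helper for illustration.
    """
    if not results:
        return None
    value, count = Counter(results).most_common(1)[0]
    # A strict majority is required; the outvoted channel is simply ignored.
    return value if count > len(results) / 2 else None


if __name__ == "__main__":
    print(majority_vote(["pedestrian", "pedestrian", "cyclist"]))
    print(majority_vote(["pedestrian", "cyclist", "car"]))
```

With three channels, two matching results outvote the third. If no majority exists, the sketch returns `None` so that the caller can fall back to a cautious default.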

It is hard to believe that such a huge collection of pixels can be evaluated in so many fundamentally different ways. First, there is the perception of the points themselves. That reminds me of the painting of the peasant girl with a cow in the Kröller-Müller Museum (NL). The cow was made up of dots. You could only recognize it if you took a few steps back.

One can also focus more on color edges and recognize identifiable shapes from them. Humans are accustomed to placing their 2D images in a 3D world. With multiple cameras, 3D can be determined directly, and measurements can be taken in such a system. Let's take a look at the technology. There are already vehicles equipped with so-called trifocal sensors. Research shows that, unlike the familiar lenses in progressive glasses, multifocal lenses cover both near and far distances without a change in viewing direction. Suitably flexible lenses can even be implanted nowadays.
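How measurements become possible with more than one camera can be illustrated with the textbook case of two parallel cameras. Assuming an idealized, rectified pinhole camera pair, the distance Z to a point follows from the focal length f (in pixels), the camera spacing B (in meters), and the disparity d (in pixels) as Z = f · B / d. The sketch below is this generic formula only, not Mobileye's method; the function name and the numbers are hypothetical.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Distance in meters to a point seen by two parallel, rectified cameras.

    Textbook stereo triangulation: Z = f * B / d.
    focal_px      focal length in pixels (assumed value)
    baseline_m    distance between the two cameras in meters (assumed value)
    disparity_px  horizontal pixel shift of the point between both images
    """
    if disparity_px <= 0:
        raise ValueError("disparity_px must be positive")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical setup: 1000 px focal length, cameras 0.5 m apart.
    print(depth_from_disparity(1000, 0.5, 20), "m")  # large shift: near object
    print(depth_from_disparity(1000, 0.5, 5), "m")   # small shift: far object
```

The smaller the shift between the two images, the farther away the object is, which is also why distance estimates become less precise at long range.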

Let's take a look at one area that users encounter in traffic. For example, a bus was recorded multiple times. One way of detecting it would be the aforementioned framing and comparison with categorized images of buses. Another algorithm captures the bus as a large part of its environment, which, depending on the shot, may fill the entire image. Then the green color mentioned earlier comes into play, setting apart all objects traveling on the road. Simply comparing it with other road users could identify the bus. In addition, one could analyze details of the bus, e.g., that unlike a closed truck, the city bus has its front wheels positioned quite far back.

There are even more possible ways to process images using algorithms, which Mobileye will certainly not reveal to us in detail. The only important thing is that each one must be run through to the end and no intermediate results may be taken over from another. Finally, you can check which evaluations have come to a common conclusion.

What we can already see from the green color of the road surface is the distinction between different objects by color. At first, the system cannot tell pedestrians apart, but as they move, they gradually take on different colors. Software can even recognize certain postures of people from the position of their limbs. A child who is about to run after a ball could then be distinguished from one standing quietly at the side of the road.

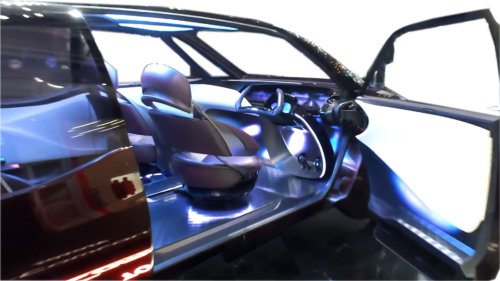

Three-dimensional surveying using images from different cameras is now in some cases better than what the human eye is capable of. Moving objects such as doors are also recognized as parts of vehicles. The 3D image no longer has to be taken by a stereo camera. It is amazing that, with the help of several cameras mounted all around, a model of the wider surroundings can also be created.

Once this technology is fully developed, it should no longer happen, for example, that an autonomous vehicle waits behind a parked car. Instead, the enhanced model will recognize the car's surroundings and understand that it is parked and not waiting at a red light. Finally, here is a video of a 40-minute drive through Jerusalem. Professor Shashua considers the traffic there to be significantly worse than in Boston.

kfz-tech.de/YAu14
